

In most applications with a batch cycle, IT and business stakeholders want to know the status of that cycle. Monitoring the status of every cycle manually is cumbersome.

Here, let’s walk through an automated process that checks the status of a given set of DataStage jobs and creates a formatted file with each job's status. This file can be used to email users the status of the jobs. The AuditDataStageJobLogs process has two parts:

A script (AuditDataStageJobLogs.sh) that pulls the last two log entries of a given job.

A DataStage job (AuditDataStageJobLogs.dsx) that processes the output of AuditDataStageJobLogs.sh and creates a user-friendly output file.

Source code for the .sh and .dsx files can be downloaded from the git repo datastage-examples.

Now let's take a deep dive into each of these.

The script uses the IBM DataStage command “dsjob -logsum” to pull the log details.
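As a rough illustration of that call, here is a minimal sketch of how the last two entries might be fetched. The function name `fetch_last_two_entries` and the `DSJOB_BIN` override are hypothetical, and using `-max 2` to limit the output is an assumption; the actual script in the repo may invoke `dsjob` differently.

```shell
# Sketch only, not the code from AuditDataStageJobLogs.sh.
# DSJOB_BIN is a hypothetical override so the function can be tried
# on a machine where the DataStage client is not installed.
DSJOB_BIN="${DSJOB_BIN:-dsjob}"

fetch_last_two_entries() {
    project="$1"   # DataStage project name
    job="$2"       # DataStage job name
    # -logsum prints a summary of the job log;
    # -max limits the number of entries that are retrieved (assumed usage).
    "$DSJOB_BIN" -logsum -max 2 "$project" "$job"
}
```

A call would then look like `fetch_last_two_entries MY_PROJECT MyJob`, where both names are placeholders.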

The script needs an input directory at the same level as the script, with the input file in it. This is one of the prerequisites for running the script. The temp and logs directories are created automatically at the same level as the script.
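The directory handling described above could be sketched roughly as follows. The function name `prepare_dirs` and the error message are illustrative assumptions, not taken from the original script.

```shell
# Sketch only: check the prerequisite and create the working directories.
prepare_dirs() {
    base_dir="$1"   # directory that contains the script
    # The input directory is a prerequisite, so fail if it is missing.
    if [ ! -d "$base_dir/input" ]; then
        echo "ERROR: missing input directory: $base_dir/input" >&2
        return 1
    fi
    # temp and logs are created on demand; -p makes this a no-op if they exist.
    mkdir -p "$base_dir/temp" "$base_dir/logs"
}
```

Inside a script, the base directory could be resolved with something like `prepare_dirs "$(cd "$(dirname "$0")" && pwd)"`.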

The script needs two parameters as input: ENVIRONMENT and INPUTFILE.

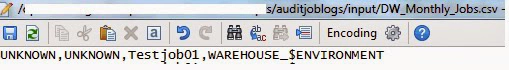

| Variable Name | Description |
|---|---|
| ENVIRONMENT | The environment or region code (for example DEV, TEST, or PROD, depending on your shop). This is the dynamic part of the project name. |
| INPUTFILE | The list of all jobs and their respective project names, in the format below. (Please ignore the first two fields, named UNKNOWN. We use them to hold the system name and scheduler job name so that the output can be summarized in various formats.) |

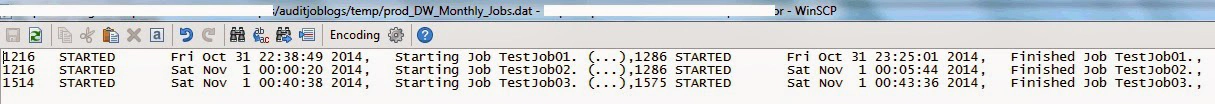
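A minimal sketch of how the two parameters might be validated before any job is queried. The function name `validate_args` is hypothetical, and treating INPUTFILE as a file name inside the input directory (rather than a full path) is an assumption.

```shell
# Sketch only: the real AuditDataStageJobLogs.sh may validate its inputs differently.
validate_args() {
    if [ "$#" -ne 2 ]; then
        echo "Usage: AuditDataStageJobLogs.sh ENVIRONMENT INPUTFILE" >&2
        return 1
    fi
    ENVIRONMENT="$1"
    INPUTFILE="$2"
    # Assumption: INPUTFILE is a file name inside ./input, not a full path.
    if [ ! -r "input/$INPUTFILE" ]; then
        echo "ERROR: cannot read input/$INPUTFILE" >&2
        return 1
    fi
}
```

Near the top of a script this would be called as `validate_args "$@" || exit 1`.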

The output and log files of this script are captured in the temp and logs directories respectively, at the same level as the script. Below is a snippet of a sample output file.

The DataStage job (AuditDataStageJobLogs.dsx) processes the output from AuditDataStageJobLogs.sh. As a prerequisite, the script must have completed with return code 0.

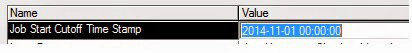

The job requires a cutoff timestamp, which is compared against each job's start time and end time to derive the cycle status.

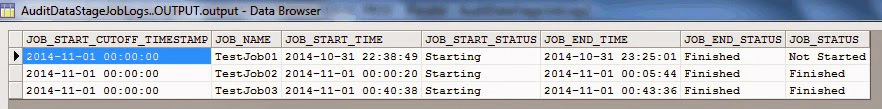
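The actual comparison lives inside the DataStage job, so the following shell sketch only illustrates the idea. The function name `derive_status`, the three status labels, and the `YYYYMMDDHHMMSS` timestamp format are all assumptions made for this example; the real job may use different rules and values.

```shell
# Sketch only: derive a status from start/end times and a cutoff.
# All timestamps are assumed to be numeric strings in YYYYMMDDHHMMSS form,
# so they can be compared as integers.
derive_status() {
    start_ts="$1"
    end_ts="$2"
    cutoff_ts="$3"
    if [ -z "$start_ts" ] || [ "$start_ts" -lt "$cutoff_ts" ]; then
        # No start recorded since the cutoff.
        echo "NOT_STARTED"
    elif [ -z "$end_ts" ] || [ "$end_ts" -lt "$start_ts" ]; then
        # Started after the cutoff, but the latest end belongs to an earlier run.
        echo "RUNNING"
    else
        # Started and ended after the cutoff.
        echo "COMPLETED"
    fi
}
```

For example, `derive_status 20240101010000 20240101020000 20240101000000` reports a job that both started and finished after a midnight cutoff.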

Below is a sample output file, which has the JOB_STATUS derived from the cutoff timestamp and the output of the script.

Hope this was helpful. Did I miss something? Let me know in the comments.
